Mastering Incrementality Testing for Paid Social Campaigns

Understanding the true impact of your advertising spend is crucial for any marketer, and incrementality testing for paid social campaigns provides the most direct answer. This advanced measurement technique helps businesses determine the actual value generated by their paid social efforts, going beyond last-click attribution to reveal what would have happened without your ads. By isolating the causal effect of your campaigns, incrementality testing empowers data-driven decisions, optimizing budgets for maximum return. It’s about proving that your paid social investment isn’t just reaching users, but genuinely driving new conversions and revenue that wouldn’t have occurred otherwise.

What is Incrementality Testing for Paid Social Ads?

Incrementality testing for paid social ads is a scientific method used to measure the true causal impact of advertising campaigns by comparing the behavior of an exposed group to a control group. This approach moves beyond traditional attribution models, which often overstate performance by crediting conversions that might have happened organically. Incrementality testing specifically isolates the “lift” or additional conversions directly attributable to the ad exposure. It answers the fundamental question: “How many extra conversions did my paid social campaign generate that wouldn’t have occurred otherwise?”

Defining Incrementality and Its Importance

Incrementality refers to the net new business outcomes, such as sales, leads, or app installs, that are directly caused by a marketing intervention. In the context of paid social, it helps marketers understand if their ad spend is genuinely driving additional value. Without incrementality testing, marketers risk misallocating budgets to campaigns that appear successful but are merely capturing existing demand or conversions that would have occurred anyway. This method provides a more accurate picture of ROI.

Distinguishing Incrementality from Attribution Models

Traditional attribution models, like last-click or first-click, assign credit to specific touchpoints in a customer journey. While useful for understanding the path to conversion, they don’t prove causation. For example, a user might see a Facebook ad, then later search for the product and convert. Last-click attribution would credit organic search. Incrementality testing, however, measures whether people who saw that Facebook ad convert more often than comparable people who didn’t, regardless of the final touchpoint. It asks “did this happen because of the ad?” rather than “which touchpoint gets the credit?” This distinction is vital for accurate budget allocation and strategic planning.

How Does a Paid Social Lift Test Work in Practice?

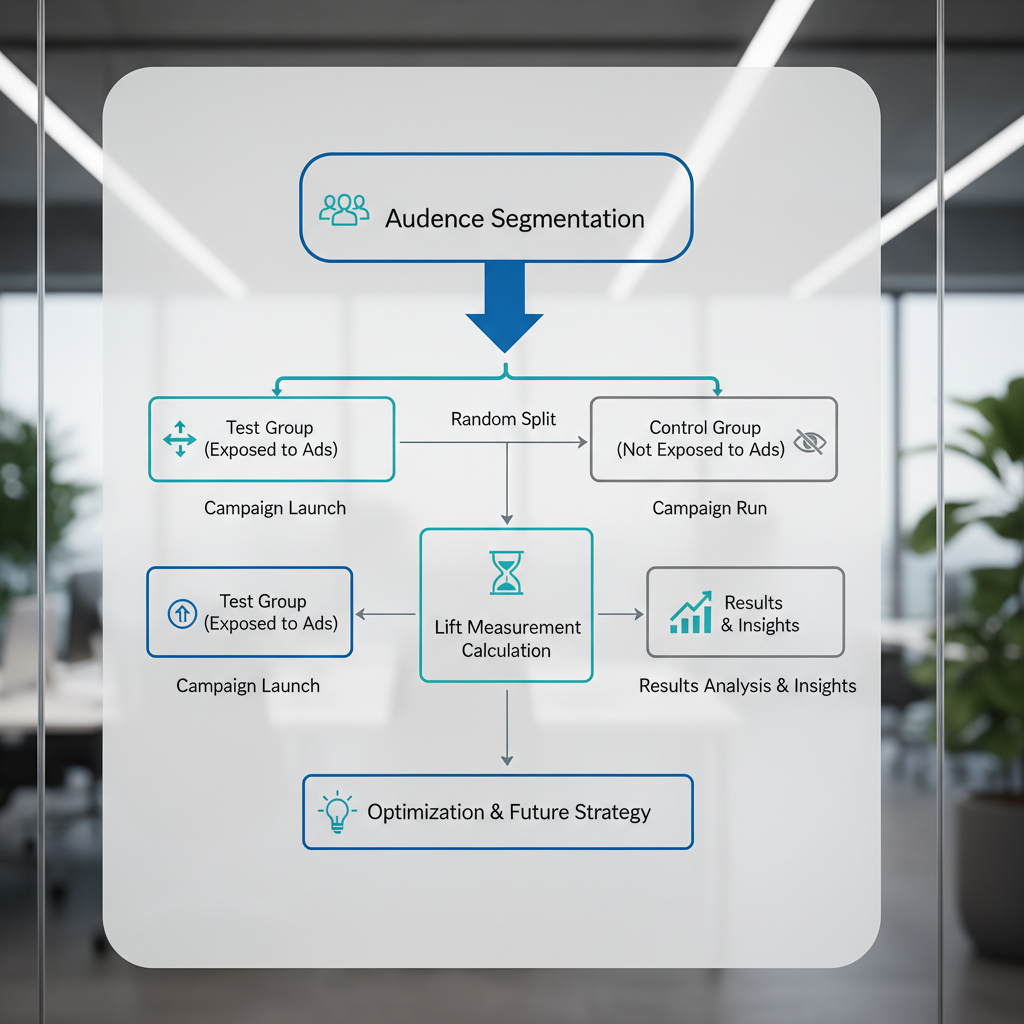

A paid social lift test typically involves dividing an audience into two statistically similar groups: a test group exposed to the paid social campaign and a control group that is intentionally not exposed to the campaign. By comparing the conversion rates or other key metrics between these two groups, marketers can quantify the incremental lift generated by their advertising efforts. This method directly measures the additional impact of your social media ads.

Setting Up Your Holdout Group for Accuracy

The cornerstone of any incrementality test is the creation of a robust holdout group. This group should be as similar as possible to the exposed group in terms of demographics, past behavior, and intent. For paid social, platforms like Meta (Facebook and Instagram) offer tools to create these control groups, often through geo-testing, ghost ads, or pixel-based suppression. The goal is to ensure that the only systematic difference between the two groups is exposure to your paid social ads. Randomly assigning users (or regions) to each group, rather than hand-picking segments, is what keeps confounding variables from skewing results. Many platforms facilitate this by allowing advertisers to exclude specific audience segments from seeing certain campaigns, effectively creating a control.
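Platform lift tools handle group assignment for you. If you build a holdout from your own customer list instead, one common approach is hash-based assignment, sketched below under a few assumptions: you have stable user identifiers (for example hashed CRM IDs), and the function name `assign_group`, the salt string, and the 10% holdout share are hypothetical choices for illustration, not a platform API. The resulting control list could then be excluded from the campaign, assuming your platform supports audience exclusions.

```python
import hashlib


def assign_group(user_id: str, salt: str, holdout_share: float = 0.1) -> str:
    """Deterministically assign a user to "test" or "control".

    Hashing the user ID together with a per-test salt gives a split that is
    effectively random across users but stable for any one user, so the same
    person always lands in the same group for the life of this test.
    """
    if not 0.0 < holdout_share < 1.0:
        raise ValueError("holdout_share must be strictly between 0 and 1")
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    # Use the first 13 hex digits (52 bits, exactly representable as a float)
    # as a uniform number in [0, 1).
    bucket = int(digest[:13], 16) / 16**13
    return "control" if bucket < holdout_share else "test"


if __name__ == "__main__":
    # Hypothetical user IDs and test name, for illustration only.
    users = [f"user_{i}" for i in range(10_000)]
    groups = {u: assign_group(u, salt="spring_promo_lift") for u in users}
    control = [u for u, g in groups.items() if g == "control"]
    print(f"{len(control)} of {len(users)} users held out")
```

Changing the salt for each new test reshuffles users, so the same people are not always the ones held out.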

Key Metrics and Measurement Methodologies

When conducting a paid social lift test, several key metrics are observed and compared:

* Conversion Rate: The percentage of users who complete a desired action (e.g., purchase, sign-up).

* Revenue: The total income generated.

* Average Order Value (AOV): The average amount spent per transaction.

* Return on Ad Spend (ROAS): The revenue generated for every dollar spent on advertising.

The primary methodology involves calculating the difference in these metrics between the test and control groups. For example, if the test group has a 5% conversion rate and the control group has a 4% conversion rate, the absolute lift is 1 percentage point, which is a 25% relative lift over the control group’s baseline. Statistical significance testing is then applied to determine whether this difference is likely due to the campaign rather than random chance. This rigorous approach ensures that conclusions drawn from the data are reliable and actionable.
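To make the lift calculation concrete, here is a minimal sketch of one standard significance test for conversion rates, the two-sided two-proportion z-test. The function name `lift_test` and the counts in the example (20,000 users per group, chosen to reproduce the 5% vs 4% rates) are hypothetical; platform lift studies may use different statistical methods.

```python
from math import erfc, sqrt


def lift_test(conv_test: int, n_test: int, conv_ctrl: int, n_ctrl: int) -> dict:
    """Absolute and relative lift plus a two-sided two-proportion z-test."""
    p_test = conv_test / n_test
    p_ctrl = conv_ctrl / n_ctrl
    abs_lift = p_test - p_ctrl          # difference in conversion rates
    rel_lift = abs_lift / p_ctrl        # lift relative to the control baseline
    # Pooled conversion rate under the null hypothesis that the ads did nothing.
    p_pool = (conv_test + conv_ctrl) / (n_test + n_ctrl)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_test + 1 / n_ctrl))
    z = abs_lift / se
    p_value = erfc(abs(z) / sqrt(2))    # two-sided tail probability of a standard normal
    return {"abs_lift": abs_lift, "rel_lift": rel_lift, "z": z, "p_value": p_value}


if __name__ == "__main__":
    # Hypothetical counts: 20,000 users per group, 5% vs 4% conversion.
    result = lift_test(conv_test=1_000, n_test=20_000, conv_ctrl=800, n_ctrl=20_000)
    print(result)
```

With these hypothetical counts the absolute lift is 0.01 (one percentage point), the relative lift is 0.25, and z is roughly 4.8, well past the usual significance cutoff. The same rates measured on only 2,000 users per group give z of roughly 1.5, which is not significant, so the same observed lift can be conclusive or inconclusive depending on sample size.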

Why is Meta Ads Incrementality Measurement Essential?

Meta ads incrementality measurement is essential because it provides a true understanding of the value your Meta ad spend delivers, moving beyond platform-reported metrics that can overstate performance because of overlapping audiences and attribution windows that credit conversions which would have happened anyway. It helps advertisers optimize budgets by identifying which campaigns genuinely drive new conversions. This is particularly critical in an ecosystem where user journeys are complex and cross-device.

Challenges with Traditional Meta Attribution

Meta’s own attribution models, while powerful for campaign management, primarily report on conversions where a Meta ad was a touchpoint within a specified window. This can lead to several issues:

* Over-attribution: If a user sees a Meta ad, then converts through another channel (e.g., organic search), Meta might still claim credit.

* Lack of Causation: It doesn’t prove the ad caused the conversion, only that it was *involved*.

* Cross-Platform Blind Spots: Meta’s data is limited to its own ecosystem, making it difficult to understand its contribution relative to other channels.

These challenges mean that relying solely on Meta’s reported ROAS might lead to inefficient budget allocation, as you could be spending on ads that don’t actually generate new business.

How Incrementality Enhances Meta Campaign Optimization

By implementing incrementality testing, marketers gain a clearer picture of their Meta campaigns’ true impact. This enables:

* Budget Reallocation: Shifting spend from campaigns with low or no incremental lift to those that demonstrate strong incremental value.

* Creative Optimization: Identifying which ad creatives and messaging genuinely resonate and drive new actions.

* Audience Refinement: Understanding which audience segments are most incrementally responsive to Meta ads.

* Strategic Planning: Informing broader marketing strategies by understanding Meta’s unique contribution to the overall marketing mix.

For instance, if a broad targeting campaign shows high reported ROAS but zero incremental lift, it suggests the campaign is merely reaching users who would convert anyway. Conversely, a niche campaign with lower reported ROAS but significant incremental lift might be a more valuable investment. This granular insight allows for more intelligent optimization of your paid social spend.
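The broad-versus-niche comparison can be sketched by computing reported ROAS alongside incremental ROAS (often abbreviated iROAS), which counts only the revenue above the holdout baseline. This assumes test and control users were split at random, so control-group revenue per user is a fair estimate of what the test group would have spent anyway. The function `roas_comparison` and every number in the example are hypothetical.

```python
def roas_comparison(spend, attributed_revenue, rev_test, n_test, rev_ctrl, n_ctrl):
    """Return (reported ROAS, incremental ROAS) for one campaign.

    Reported ROAS uses the revenue the platform attributes to the ads.
    Incremental ROAS uses the holdout: control-group revenue per user
    estimates what the exposed group would have spent without the ads.
    """
    reported = attributed_revenue / spend
    baseline_per_user = rev_ctrl / n_ctrl
    incremental_revenue = rev_test - baseline_per_user * n_test
    incremental = incremental_revenue / spend
    return reported, incremental


if __name__ == "__main__":
    # Hypothetical broad campaign: strong attributed revenue, little true lift.
    broad = roas_comparison(spend=10_000, attributed_revenue=60_000,
                            rev_test=180_000, n_test=90_000,
                            rev_ctrl=19_800, n_ctrl=10_000)
    # Hypothetical niche campaign: modest attributed revenue, large true lift.
    niche = roas_comparison(spend=5_000, attributed_revenue=10_000,
                            rev_test=54_000, n_test=18_000,
                            rev_ctrl=4_000, n_ctrl=2_000)
    print("broad: reported %.2f, incremental %.2f" % broad)
    print("niche: reported %.2f, incremental %.2f" % niche)
```

In this made-up scenario the broad campaign reports a ROAS of 6.0 but returns only about 0.18 in incremental revenue per dollar, while the niche campaign reports 2.0 and returns about 3.6, which is exactly the reversal described above.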

Designing an Effective Campaign Incrementality Measurement Strategy

An effective campaign incrementality measurement strategy requires careful planning, a clear understanding of your objectives, and the right tools and methodologies to ensure accurate and actionable results. It’s about creating a structured approach to validate your marketing investments. This strategic framework helps to quantify the true impact of your advertising efforts.

Choosing the Right Incrementality Test Type

There are several types of incrementality tests, each suited for different scenarios:

* Geo-Lift Tests: Dividing geographic regions into test and control groups. Ideal for campaigns targeting specific locations.

* Ghost Ad/Pixel Suppression Tests: Withholding ads from a randomly selected control group while the ad system logs which control users would have been shown the ad (“ghost” impressions), or suppressing ad delivery to a pixel-based audience. Effective for measuring the impact of specific ad sets or audiences.

* Matched Market Tests: Identifying markets with similar characteristics and exposing one to a campaign while holding the other as a control.

* User-Level Randomization: Randomly assigning individual users to test or control groups. This is often the most precise but can be harder to implement at scale without advanced platforms.

The choice depends on factors like budget, target audience, campaign structure, and available platform capabilities. For instance, geo-lift tests are excellent for brand awareness campaigns across regions.
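For geo-lift and matched-market tests, the simplest way to read results is a difference-in-differences estimate: compare how much the test regions changed during the campaign with how much the control regions changed over the same period. The sketch below assumes the two sets of regions would have moved in parallel without the campaign; production geo tools often use more robust approaches such as synthetic control. The function name and the daily figures are hypothetical.

```python
from statistics import mean


def diff_in_diff(test_pre, test_post, ctrl_pre, ctrl_post):
    """Difference-in-differences lift estimate for a geo or matched-market test.

    Each argument is a sequence of daily conversions (or revenue) summed over
    the test regions or the control regions, before and during the campaign.
    The control regions' change over the same period stands in for what the
    test regions would have done without the campaign.
    """
    test_change = mean(test_post) - mean(test_pre)
    ctrl_change = mean(ctrl_post) - mean(ctrl_pre)
    return test_change - ctrl_change  # incremental conversions per day


if __name__ == "__main__":
    # Hypothetical daily conversions, for illustration only.
    lift_per_day = diff_in_diff(
        test_pre=[100, 102, 98, 100], test_post=[120, 118, 122, 120],
        ctrl_pre=[80, 82, 78, 80], ctrl_post=[85, 84, 86, 85],
    )
    print(lift_per_day)
```

Here the test regions rose by 20 conversions per day and the control regions by 5, so the estimate is 15 incremental conversions per day; the 5-conversion rise in the control regions is treated as seasonality or other background change that would have happened anyway.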

Key Considerations for Test Design and Duration

Successful incrementality testing hinges on meticulous design:

1. Define Clear Hypotheses: What specific impact are you trying to measure? (e.g., “Campaign X will increase purchases by 10%”).

2. Determine Sample Size: Ensure enough users in both test and control groups to achieve statistical significance. This prevents false positives or negatives.

3. Establish Baseline Data: Collect pre-test data to understand normal conversion trends and identify any existing differences between groups.

4. Set Test Duration: Tests need to run long enough to capture a full conversion cycle and account for seasonality or external factors. Too short, and results might be inconclusive; too long, and you risk external influences.

5. Minimize Contamination: Ensure the control group remains truly unexposed. This is often the biggest challenge.

6. Select Metrics: Focus on a few key performance indicators (KPIs) that directly align with your campaign goals.

By carefully considering these factors, marketers can design tests that yield reliable and impactful insights.
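Step 2 (sample size) is where many tests fail. A standard power calculation for comparing two conversion rates can be sketched as follows, assuming equal-sized test and control groups, a two-sided test at significance level `alpha`, and the desired statistical `power`. The function name `users_per_group` and the example inputs are hypothetical; the 10% lift mirrors the example hypothesis in step 1.

```python
from math import ceil, sqrt
from statistics import NormalDist


def users_per_group(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Users needed in EACH group to detect `relative_lift` over `baseline_rate`.

    Uses the normal-approximation formula for a two-sided two-proportion test
    with equal group sizes.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)            # about 0.84 for 80% power
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


if __name__ == "__main__":
    # Hypothetical inputs: 4% baseline conversion rate, aiming to detect a 10% lift.
    print(users_per_group(baseline_rate=0.04, relative_lift=0.10))
```

With these inputs the result is roughly 39,500 users per group. Because the formula assumes a 50/50 split, a smaller holdout (for example 10% of the audience) needs a considerably larger total audience to reach the same power.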

Overcoming Challenges in Holdout Test Marketing for Social

Implementing a holdout test marketing strategy for paid social campaigns comes with inherent challenges, primarily related to audience segmentation, data privacy, and the dynamic nature of social media platforms. Addressing these issues is crucial for obtaining accurate and actionable incrementality results. Overcoming these hurdles ensures the integrity of your measurement.

Addressing Data Privacy and User Tracking Limitations

The increasing focus on user privacy, driven by regulations like GDPR and CCPA, along with changes in tracking technologies (e.g., Apple’s ATT framework), presents significant hurdles for incrementality testing. It becomes harder to create truly distinct test and control groups at the individual user level, especially across different platforms and devices.

* Privacy-Enhancing Technologies: Marketers must increasingly rely on aggregated, privacy-safe methods like differential privacy or synthetic data.

* Platform-Provided Solutions: Leveraging built-in incrementality tools offered by social platforms (e.g., Meta’s Brand Lift or Conversion Lift studies) can help, as these operate within the platform’s privacy boundaries.

* Server-Side Tracking: Implementing server-side tracking can improve data accuracy by reducing reliance on client-side cookies, which are more susceptible to privacy restrictions.

These adaptations are vital to maintain measurement capabilities in a privacy-first world.

Ensuring Statistical Significance and Avoiding Contamination

Achieving statistical significance means ensuring that the observed differences between your test and control groups are not merely due to random chance. This requires:

* Adequate Sample Size: Running tests with enough users and conversions to detect a meaningful difference.

* Proper Randomization: Ensuring users are truly randomly assigned to groups to minimize bias.

* Contamination Prevention: This is particularly difficult in social media. Users in a control group might still encounter the campaign indirectly, for example through organic shares of the ad, word-of-mouth from exposed users, or other campaigns that don’t respect the holdout. Strategies include:

  * Geo-based testing: Physically separating test and control regions.

  * Ghost Ad or PSA Methodology: Logging which control users would have been served the ad without actually serving it (ghost ads), or showing them an unrelated placebo ad such as a public service announcement (PSA), so that the comparison is between equally reachable users.

  * Exclusion Lists: Carefully excluding control group members from *any* paid exposure.
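One quick check on “Proper Randomization” is a sample ratio mismatch (SRM) test: if you planned a 10% holdout, the observed group sizes should match that split up to random noise. A large deviation usually points to a bug in assignment or to users leaking between groups. The sketch below uses a chi-square test with one degree of freedom; the function name and counts are hypothetical, and many teams treat only very small p-values (for example below 0.001) as a red flag.

```python
from math import erfc, sqrt


def sample_ratio_check(n_test, n_ctrl, expected_ctrl_share):
    """Chi-square test (1 degree of freedom) for sample ratio mismatch.

    Returns (chi2, p_value). A tiny p-value means the observed split is very
    unlikely under the planned allocation.
    """
    total = n_test + n_ctrl
    exp_ctrl = total * expected_ctrl_share
    exp_test = total - exp_ctrl
    chi2 = (n_test - exp_test) ** 2 / exp_test + (n_ctrl - exp_ctrl) ** 2 / exp_ctrl
    # For 1 degree of freedom, P(chi-square > x) equals erfc(sqrt(x / 2)).
    p_value = erfc(sqrt(chi2 / 2))
    return chi2, p_value


if __name__ == "__main__":
    # Hypothetical counts for a planned 10% holdout.
    print(sample_ratio_check(90_500, 9_500, expected_ctrl_share=0.10))  # suspicious
    print(sample_ratio_check(90_050, 9_950, expected_ctrl_share=0.10))  # plausible
```

A 90,500 / 9,500 split gives a chi-square of about 27.8 and a p-value far below 0.001, so that test should be investigated before anyone reads its lift; a 90,050 / 9,950 split is well within normal variation.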

Comparison of Incrementality Test Types

| Test Type | Pros | Cons | Best For |
| :--- | :--- | :--- | :--- |
| Geo-Lift Test | Clear separation, strong for brand campaigns, less prone to cookie issues. | Requires significant budget, longer duration, assumes geo-uniformity. | Broad campaigns, brand awareness, testing new market entry. |
| Pixel Suppression | Precise audience control, good for direct response. | Relies on pixel data, privacy concerns, potential for cookie blocking. | Retargeting campaigns, specific audience segments. |
| Ghost Ad Test | Compares exposed users with control users who would have seen the ad, without paying for placebo impressions. | Requires ad-server support, so it is usually run through platform lift tools. | Measuring conversion lift among the users a campaign actually reaches. |
| Matched Market Test | Strong for regional campaigns, accounts for market nuances. | Finding truly matched markets is challenging, external factors can vary. | Regional product launches, localized campaigns. |

By carefully selecting the test type and implementing robust controls, marketers can overcome many common challenges in social media incrementality testing.

Interpreting and Acting on Incrementality Results

Interpreting the results of your campaign incrementality measurement is not just about looking at a single number; it requires a nuanced understanding of statistical significance, confidence intervals, and the broader business context. The true value lies in translating these insights into actionable strategies that optimize your paid social spend. This phase is critical for turning data into improved performance.

Understanding Statistical Significance and Confidence

When you run an incrementality test, you’ll observe a difference in performance between your test and control groups. However, this difference might be due to random chance rather than your campaign.

* Statistical Significance: The p-value is the probability of seeing a difference at least as large as the one observed if the campaign had no real effect. A common threshold is p < 0.05: if the campaign truly did nothing, a gap this large would appear less than 5% of the time by chance alone. If your results are statistically significant, you can be reasonably confident that your campaign had a real effect.

* Confidence Interval: This provides a range within which the true incremental lift likely falls. For example, if a test shows an incremental lift of 15% with a 95% confidence interval of +/- 3%, it means you are 95% confident that the true lift is between 12% and 18%.

Both metrics are crucial for making informed decisions. A lift can be statistically significant yet still have a wide confidence interval, which means the size of the effect is estimated imprecisely and budget decisions based on the point estimate carry more risk.
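A confidence interval for the absolute lift can be sketched with the normal-approximation (Wald) interval for a difference in two proportions. This is one common textbook method, not necessarily what any given platform reports; the function name and the counts in the example are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def lift_confidence_interval(conv_test, n_test, conv_ctrl, n_ctrl, level=0.95):
    """Wald confidence interval for the absolute lift (difference in conversion rates)."""
    p_t = conv_test / n_test
    p_c = conv_ctrl / n_ctrl
    diff = p_t - p_c
    # Unpooled standard error of the difference between two independent proportions.
    se = sqrt(p_t * (1 - p_t) / n_test + p_c * (1 - p_c) / n_ctrl)
    z = NormalDist().inv_cdf(0.5 + level / 2)   # about 1.96 for a 95% interval
    return diff - z * se, diff + z * se


if __name__ == "__main__":
    # Hypothetical counts: 20,000 users per group, 5% vs 4% conversion.
    low, high = lift_confidence_interval(1_000, 20_000, 800, 20_000)
    print(f"absolute lift between {low:.4f} and {high:.4f}")
```

With these counts the interval runs from roughly 0.006 to 0.014, that is, about 0.6 to 1.4 percentage points. Shrinking both groups to 2,000 users keeps the same point estimate but widens the interval until it includes zero.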

Translating Insights into Actionable Paid Social Strategies

Once you have statistically significant and reliable incrementality results, the next step is to translate them into tangible actions:

* Budget Optimization: If a campaign shows strong incremental lift, consider increasing its budget. Conversely, if a campaign has high reported ROAS but zero or negative incrementality, reduce or reallocate its spend. This is the core benefit of understanding your paid social lift test outcomes.

* Creative and Audience Refinement: Analyze which creatives, messaging, or audience segments delivered the highest incremental value. Double down on these successful elements and iterate on underperforming ones.

* Channel Allocation: Use incrementality data to compare the true effectiveness of paid social against other marketing channels. This helps in building a more efficient overall media mix.

* Long-Term Strategy: Incrementality testing isn’t a one-time event. Integrate it into your ongoing measurement framework to continuously learn and adapt your strategies.

* Testing New Initiatives: Before scaling new campaigns or targeting strategies, run incrementality tests to validate their true impact. This prevents costly mistakes.

By systematically applying these insights, marketers can ensure their paid social investments are driving genuine business growth and not just cannibalizing existing demand.

What is the main difference between attribution and incrementality?

Attribution models assign credit to touchpoints in a conversion path, showing where a conversion happened. Incrementality measures the causal effect of an ad, determining if a conversion would have occurred without the ad. Attribution answers “which touchpoint got credit?” while incrementality answers “did the ad cause a new conversion?”

Why can’t I just trust platform-reported ROAS for paid social?

Platform-reported ROAS can be misleading because platforms often take credit for conversions that might have happened organically or through other channels. They track interactions within their ecosystem, which doesn’t account for users who would have converted anyway. Incrementality provides a more accurate, unbiased view of true performance.

How long should an incrementality test run?

The duration of an incrementality test depends on your conversion cycle and the volume of conversions. Generally, tests should run for at least 2-4 weeks to gather enough data and account for weekly fluctuations. Longer purchase cycles may require longer test durations to capture the full impact and achieve statistical significance.

Is incrementality testing only for large budgets?

While larger budgets provide more data and make statistical significance easier to achieve, incrementality testing can be adapted for smaller budgets. Geo-lift tests or phased rollouts can be viable options. The key is to design a test that can still yield statistically significant results given your resources and audience size.

What is a “ghost ad” in incrementality testing?

A “ghost ad” is a technique in which control-group users are not shown the ad at all; instead, the ad system records the moments when it would have shown them the ad. This lets you compare users who actually saw the ad with control users who would have seen it, which gives a cleaner lift estimate than comparing against the whole control group and avoids paying for placebo ads. It differs from a PSA (public service announcement) test, where the control group is shown an unrelated placebo ad instead.

Can I run incrementality tests across different social platforms simultaneously?

Running cross-platform incrementality tests is complex but highly valuable. It often requires advanced measurement solutions or a combination of platform-specific tests. The challenge lies in creating truly independent control groups across different walled gardens. However, understanding the incremental value of each platform is crucial for holistic media mix optimization.

Unlocking the true value of your paid social investment hinges on embracing incrementality testing. By moving beyond surface-level metrics and delving into the causal impact of your campaigns, you gain unparalleled clarity on what truly drives business growth.

Key takeaways for your paid social strategy:

* Prioritize Causation over Correlation: Understand what actually *causes* conversions, not just what correlates with them.

* Optimize for True Lift: Reallocate budgets to campaigns and creatives that demonstrate genuine incremental value.

* Embrace Holdout Methodologies: Utilize control groups to isolate the true impact of your advertising efforts.

* Leverage Platform Tools: Explore built-in incrementality solutions offered by platforms like Meta for deeper insights.

* Continuously Test and Learn: Integrate incrementality into your ongoing measurement framework for sustained optimization.

By adopting a rigorous approach to incrementality testing for paid social, you can transform your marketing efforts from guesswork to a precise science, ensuring every dollar spent works harder for your business. Start measuring your true impact today and drive more efficient, effective growth.